If we look back through history, we’ll notice that many great technologists focused on a few qualities they believed were key to progress. One of the foremost of these was precision. From the first gunpowder firearms to Swiss watches to capacitive touchscreens, people have strived to make each new device more precise than the last. Following the example of these pioneers, Virtual Reality companies have done their best to make their current products more accurate and efficient to use.

One complaint heard time and again from users across the globe is: “The visual isn’t accurate, it doesn’t follow my movements properly.” Since VR is still in its early stages, irregularities in the experience are common, often in the form of flickering objects, spatial errors, sluggish headset tracking, and difficulty interacting with in-game items. To counteract these problems, however, VR headset developers have found a promising solution: eye tracking.

The Gist

So let’s get the general gist, shall we? What is eye tracking? Simply put, eye tracking is a sensor technology. Its purpose is to detect the movement of your irises and pupils, along with the eye’s resting position and pupil dilation, to gauge presence and attention, which tells us where the user is focusing. The more accurately we can measure these signals, the better our chances of building accurate visual systems.
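To make the pupil measurements above more concrete, here is a minimal sketch of one simple approach, assuming a grayscale infrared eye image in which the pupil shows up as the darkest region (the “dark-pupil” setup): treat pixels below a brightness threshold as pupil, take their centroid as the pupil centre, and use the blob’s area as a rough proxy for dilation. The function name `estimate_pupil`, the threshold of 50, and the nested-list image format are hypothetical choices for illustration; real trackers add glint detection, ellipse fitting, and per-user calibration.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PupilEstimate:
    x: float         # column of the pupil centre, in pixels
    y: float         # row of the pupil centre, in pixels
    diameter: float  # diameter of a circle with the same area, in pixels (rough dilation proxy)


def estimate_pupil(image: List[List[int]], threshold: int = 50) -> Optional[PupilEstimate]:
    """Hypothetical dark-pupil estimator: treat pixels darker than `threshold` as pupil."""
    xs: List[int] = []
    ys: List[int] = []
    for row_idx, row in enumerate(image):
        for col_idx, value in enumerate(row):
            if value < threshold:
                xs.append(col_idx)
                ys.append(row_idx)
    if not xs:
        return None  # no dark blob found, e.g. during a blink
    area = len(xs)
    # Centroid of the dark pixels approximates the pupil centre.
    cx = sum(xs) / area
    cy = sum(ys) / area
    # Diameter of a circle with the same pixel area; it grows as the pupil dilates.
    diameter = 2.0 * math.sqrt(area / math.pi)
    return PupilEstimate(cx, cy, diameter)


if __name__ == "__main__":
    # Toy 7x7 "infrared frame": bright background with a dark 3x3 pupil.
    frame = [[200] * 7 for _ in range(7)]
    for r in range(2, 5):
        for c in range(3, 6):
            frame[r][c] = 10
    print(estimate_pupil(frame))  # centre at x=4.0, y=3.0
```

Tracking eye movement then amounts to comparing successive estimates frame by frame; mapping the pupil centre to a point on the display needs a calibration step that this sketch leaves out.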

Foveated Rendering

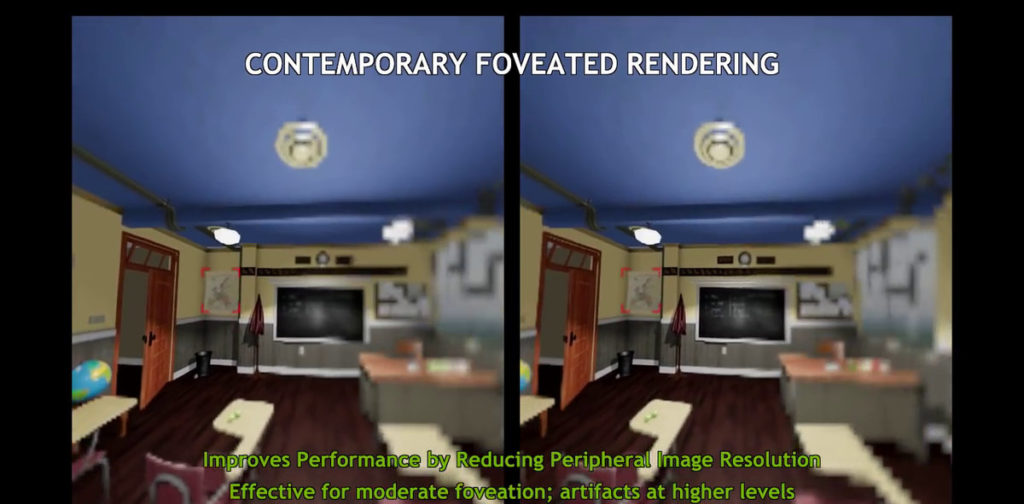

One reason VR games and immersive experiences are often low in quality is that they use two screens to create a wide, panoramic image, which the user perceives as a single continuous view from one side to the other. Rendering all of it at full resolution consumes a major share of the GPU, so developers prefer to keep graphics light to avoid overloading it. With eye tracking, however, the system need not render the entire panoramic image at full quality. Instead, it renders a smaller, high-quality segment of the image only in the direction the user is looking, while the periphery becomes a lower-resolution version of the same image. This works because the eye sees sharp detail only in a small central region of the retina, the fovea, so the lower-resolution periphery largely goes unnoticed. As a result, instead of the entire image, the GPU only has to produce a small high-quality region, which keeps the rendering load manageable. This technique of concentrating detail where the user’s eyes are looking is called foveated rendering.
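The idea of spending detail only near the gaze point can be sketched as a per-tile quality decision. This is a minimal illustration, not any engine’s or headset’s actual scheme: it assumes the screen is split into square tiles, that angular distance from the gaze point can be approximated with a constant pixels-per-degree value, and it uses three hypothetical quality tiers (full resolution within 5°, half resolution within 15°, quarter resolution beyond). It then reports what fraction of full-resolution pixels the GPU would actually shade.

```python
import math
from typing import List, Tuple

# Hypothetical quality tiers: (max eccentricity in degrees, resolution scale per axis).
TIERS: List[Tuple[float, float]] = [(5.0, 1.0), (15.0, 0.5), (math.inf, 0.25)]


def tile_scale(center: Tuple[float, float], gaze: Tuple[float, float],
               pixels_per_degree: float) -> float:
    """Resolution scale for a tile, based on its angular distance from the gaze point."""
    # Assumes a constant pixels-per-degree, a simplification of real lens optics.
    eccentricity = math.hypot(center[0] - gaze[0], center[1] - gaze[1]) / pixels_per_degree
    for max_deg, scale in TIERS:
        if eccentricity <= max_deg:
            return scale
    return TIERS[-1][1]


def shading_cost(width: int, height: int, gaze: Tuple[float, float],
                 tile: int = 32, pixels_per_degree: float = 20.0) -> float:
    """Fraction of full-resolution pixels that are actually shaded."""
    full = 0
    shaded = 0.0
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            w = min(tile, width - tx)
            h = min(tile, height - ty)
            s = tile_scale((tx + w / 2, ty + h / 2), gaze, pixels_per_degree)
            full += w * h
            # Scaling both axes by s shades s * s as many pixels.
            shaded += w * h * s * s
    return shaded / full


if __name__ == "__main__":
    # Hypothetical 1440x1600 per-eye buffer with the user looking at its centre.
    fraction = shading_cost(1440, 1600, gaze=(720.0, 800.0))
    print(f"GPU shades about {fraction:.0%} of the full-resolution pixels")
```

Because the resolution scale applies to both axes, halving it cuts the shaded pixels to a quarter, which is why even a modest periphery reduction saves a large share of GPU work. The tier boundaries and pixels-per-degree value here are placeholders; a real system would tune them to the headset’s optics and the tracker’s accuracy.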

Natural Interactions

With head tracking alone, VR games can only estimate which object a user wishes to interact with, whether it is at a distance or up close. With eye tracking, developers can get a much more accurate account of which object the user actually wants to interact with. This can lead to more detailed in-game objects and more complex level design.
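The difference between head-based and gaze-based targeting can be sketched with a simple angular test. This minimal example assumes the headset reports a ray origin and direction in world coordinates and picks the object whose direction makes the smallest angle with that ray, within a hypothetical 3° tolerance cone. The function names, the tolerance, and the toy scene are illustrative only and do not correspond to any headset SDK’s API.

```python
import math
from typing import Dict, Optional, Sequence

Vec3 = Sequence[float]


def angle_deg(a: Vec3, b: Vec3) -> float:
    """Angle between two 3D vectors, in degrees."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        return math.inf  # degenerate vector: treat as "not aligned"
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def pick_target(origin: Vec3, direction: Vec3, objects: Dict[str, Vec3],
                max_angle_deg: float = 3.0) -> Optional[str]:
    """Return the object closest to the ray's direction, within a tolerance cone."""
    best_name: Optional[str] = None
    best_angle = max_angle_deg
    for name, position in objects.items():
        to_object = [p - o for p, o in zip(position, origin)]
        angle = angle_deg(direction, to_object)
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name


if __name__ == "__main__":
    scene = {"lever": (0.0, 0.0, -2.0), "cup": (0.5, 0.0, -2.0)}
    eye = (0.0, 0.0, 0.0)
    head_forward = (0.0, 0.0, -1.0)  # head points straight ahead
    gaze = (0.5, 0.0, -2.0)          # eyes glance toward the cup
    print("head picks:", pick_target(eye, head_forward, scene))  # lever
    print("gaze picks:", pick_target(eye, gaze, scene))          # cup
```

With the head pointing straight ahead, the head ray selects the lever even though the user is looking at the cup; the gaze ray selects the cup. The tolerance cone keeps small tracking jitter from flipping the selection to a neighbouring object.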

The FOVE Story

In 2014, FOVE, a Kickstarter-backed project from Tokyo started by Yuka Kojima alongside Lochlainn Wilson, created the world’s first precise eye-tracking VR headset. In partnership with REWIND, FOVE debuted its AAA-quality Project Falcon headset at Tokyo Game Show 2016, showing off both positional and eye tracking. The experience shown to the world that day spoke for itself: eye tracking was indeed the next big step for VR experiences.

The Future

Once the world saw what eye tracking could mean for VR, the bandwagon started rolling and everyone hurried on board, bringing better, more precise technology with them. Eyefluence, SMI, and HTC all began paving the way for future VR headsets with innovative devices such as those from SMI and the Vive Focus. The future of eye tracking and VR is beginning to unfold, and as this technology matures, we can expect mind-blowing devices to come.